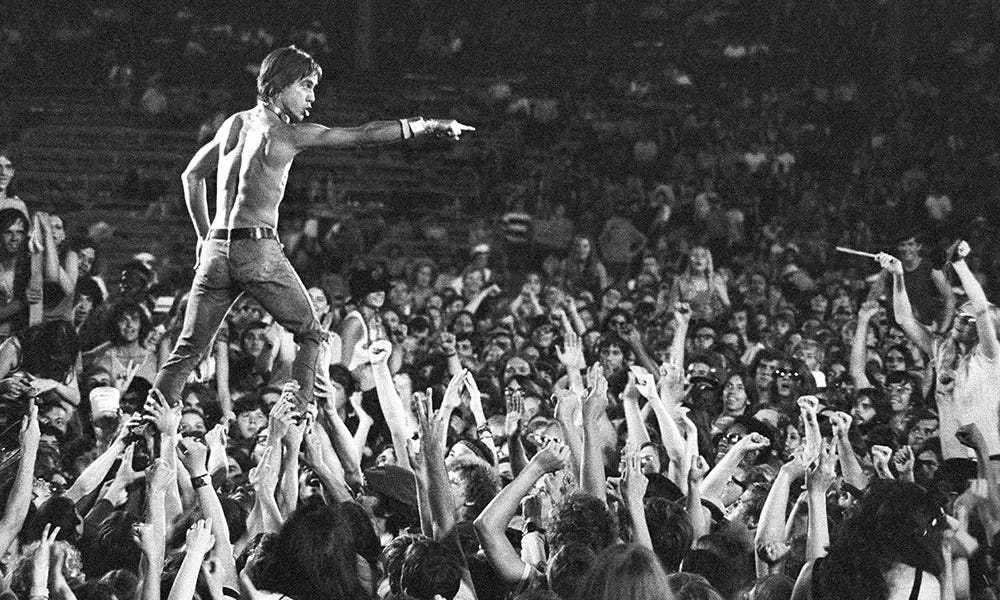

Gimme Danger

How Pro-Nuclear Lobbyists shot themselves in the foot.

Public Law No: 115-439 (01/14/2019), the Nuclear Energy Innovation and Modernization Act (NEIMA), is now on the books.

A key provision of this landmark legislation is found in SEC. 103. ADVANCED NUCLEAR REACTOR PROGRAM, which reads:

“(2) RISK-INFORMED LICENSING.—Not later than 2 years after the date of enactment of this Act, the Commission shall develop and implement, where appropriate, strategies for the increased use of risk-informed, performance-based licensing evaluation techniques and guidance for commercial advanced nuclear reactors within the existing regulatory framework, including evaluation techniques and guidance for the resolution of the following:

(A) Applicable policy issues identified during the course of review by the Commission of a commercial advanced nuclear reactor licensing application.

(B) The issues described in SECY–93–092 and SECY–15–077, including—

(i) licensing basis event selection and evaluation;

(ii) source terms;

(iii) containment performance; and

(iv) emergency preparedness”

This risk-informed, performance-based provision explicitly requires the Nuclear Regulatory Commission (NRC) to employ Probabilistic Risk Assessment (PRA) in the licensing of new Advanced Nuclear Reactors.

That is, for the first time, the Congress of the United States (a collection of STEM-incompetent politicians) has instructed NRC safety engineering experts to use a particular mathematical model to predict public risk when licensing a new generation of nuclear reactors … now, that should scare the hell out of anybody.

So, when did the United States Congress get to be smarter than nuclear engineers at the NRC? Well … never. In fact, Congress was just responding to political pressure from lobbyists representing the nuclear industry.

Just to be clear, congressional staffers don’t have the expertise needed to craft the legislation included in NEIMA. The fact is, NEIMA was written by the Nuclear Energy Institute (NEI) … the nuclear industry’s principal lobbying agent.

The NEI’s objective? They believed that by having Congress pass legislation requiring the NRC to accept PRA as a means of gauging the efficacy of protections, the nuclear industry could slip out from beneath some of the cost burden of the NRC’s General Design Criteria (which they believe are excessive and too restrictive).

Oops … big problem! While the NEI, the Department of Energy (DOE), the Electric Power Research Institute (EPRI) and a host of industry consultants know how to compute the key efficacy metrics of the PRA methodology (e.g., Core Damage Frequency (CDF), the rate of Loss-of-Coolant Accidents (LOCA), etc.), they never really knew how to interpret the numbers yielded by PRA.

Exactly how did it happen that NEI, EPRI, DOE, NRC, industry consultants and ultimately the Congress of the United States came to misinterpret the results of PRA?

The genesis of PRA can be traced to Norman Rasmussen, the MIT nuclear engineering professor who authored NUREG 75/014, Reactor Safety Study -- An Assessment of Accident Risk in U.S. Commercial Nuclear Power Plants in 1972. This 1400+ page study was initiated by the Atomic Energy Commission (AEC), predecessor to the NRC. Within the study, Rasmussen and his colleagues presented the computational elements used in constructing a PRA; these elements remain largely unchanged to this day. Legend has it that Rasmussen was a highly skilled poker player … lending to his reputation as a competent probabilist.

However, Rasmussen was not a competent probabilist, and his understanding of the stochastic point processes underlying PRA was flawed. Rasmussen believed, for example, that the PRA-predicted rates of occurrence of core damage and loss-of-coolant accidents should be interpreted as numerical constants. But Rasmussen was unfamiliar with Palm probability and with the martingale calculus as it applies to stochastic counting processes.

When studying the occurrence of core damage events, the martingale calculus reveals the very impractical assumptions required to ensure the existence and uniqueness of the CDF numerical constant reported by PRA.

Obviously, any constant characterizing risk requires stochastic stationarity (i.e., the rate of occurrence of core damage events is not changing over time).

Hence, PRA must rely on some unidentified convergence theorem. But there is no engineering justification for believing that the rate of core damage events should be constant, since the eventual discovery of as yet undiscovered accident scenarios (e.g., due to climate change) is always a reasonable anticipation. Thus, for all possible reactor life cycles, CDF almost surely undercounts the number of core damage events. Worse yet, even when the very unlikely conditions are satisfied under which the core damage rate will converge to a constant, the Palm calculus reveals that the true rate of occurrence of core damage events converges to CDF only when the occurrence of these events is completely independent of all operations history … which implies that reactor maintenance is useless for improving safety … which is, of course, ridiculous and again leads to an optimistic bias in CDF.
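To make the stationarity point concrete, here is a toy comparison, not anything taken from a real PRA. It assumes a hypothetical event rate that starts at `rate0` and drifts upward linearly by `drift` per year as new accident scenarios come to light, and compares the expected number of events over a reactor life with the count a constant-rate reading would report. The function names, the linear drift, and the numbers are all illustrative assumptions.

```python
def expected_count_constant(rate, years):
    """Expected events if the rate really were a constant (events per year)."""
    return rate * years


def expected_count_drifting(rate0, drift, years, steps=10_000):
    """Expected events when rate(t) = rate0 + drift * t (a hypothetical drift).

    The expected count of a counting process is the integral of its rate,
    approximated here with the midpoint rule.
    """
    dt = years / steps
    total = 0.0
    for k in range(steps):
        t = (k + 0.5) * dt          # midpoint of the k-th time slice
        total += (rate0 + drift * t) * dt
    return total


# Illustrative numbers only: 0.01 events/year today, drifting up by
# 0.001 events/year for each year of a 40-year life.
constant = expected_count_constant(0.01, 40)
drifting = expected_count_drifting(0.01, 0.001, 40)
print(f"constant-rate reading: {constant:.2f} expected events")
print(f"drifting-rate reading: {drifting:.2f} expected events")
```

Under these made-up inputs, the constant-rate figure is a floor on the expected count, and any upward drift pushes the true expectation above it.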

By the time that NUREG 75/014 appeared in 1972, characterization of counting processes through the Palm-martingale calculus had been well known within the telecommunication engineering community for more than a decade.

Telecommunications experts have understood since the 1940s that the behavior of engineered systems when observed at random times (e.g., the time of a stressful event that, if unchecked, would lead to catastrophe) is not the same as would be predicted at ordinary times (as PRA does), except in very special stochastic circumstances.
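One textbook illustration of this kind of discrepancy is the so-called inspection paradox. It is offered here as a sketch, not as an example from the PRA literature. The simulation assumes a hypothetical process whose gaps between events are uniform on (0, 2), so the average gap counted event by event is 1. An observer who shows up at a uniformly random instant, however, lands in long gaps more often than short ones and sees an average covering gap of about 4/3. The function name, the distribution, and the sample sizes are illustrative assumptions.

```python
import bisect
import random


def simulate(n_intervals=200_000, n_probes=50_000, seed=0):
    """Compare the event-averaged gap with the gap seen at a random instant."""
    rng = random.Random(seed)

    # Gaps between successive events: uniform on (0, 2), mean 1 (hypothetical).
    gaps = [rng.uniform(0.0, 2.0) for _ in range(n_intervals)]

    # Event times are the running sums of the gaps; times[i] ends gap i.
    times = []
    t = 0.0
    for g in gaps:
        t += g
        times.append(t)

    # Event-averaged view: every gap counted exactly once.
    event_avg = sum(gaps) / len(gaps)

    # Time-averaged view: pick a random instant, record the gap that covers it.
    total = 0.0
    for _ in range(n_probes):
        u = rng.uniform(0.0, times[-1])
        i = min(bisect.bisect_right(times, u), n_intervals - 1)
        total += gaps[i]
    time_avg = total / n_probes

    return event_avg, time_avg


event_avg, time_avg = simulate()
print(f"mean gap, counted per event:      {event_avg:.3f}")
print(f"mean gap seen at a random moment: {time_avg:.3f}")
```

The two averages agree only for special processes (the memoryless Poisson case being the usual example of agreement between event-time and ordinary-time views), which is the sense in which the coincidence is a special circumstance and not the rule.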

By 1972, the analytical principles explaining this discrepancy were complete and available in the open literature. The long and short is that Rasmussen’s reliance on seat-of-the-pants mathematics should have been recognized and rejected by the nuclear safety engineering community before PRA ever gained traction.

What is clear, today, is that a declining nuclear industry sought to gain a cost-reducing financial advantage by snookering the U.S. Congress into passing NEIMA, which forced the NRC to evaluate the efficacy of regulated safety-critical protections through the lens of risk-informed, performance-based analytics … i.e., Rasmussen’s PRA. They now face the consequences of legislation, which they crafted, that in practical terms specifies that advanced reactor licensing must include risk analytics that emphasize computing a lower bound on key safety-efficacy metrics … that is, PRA yields “at least this bad” risk assessments.

Consider, for example, a PRA that yields a value of CDF = 0.01/reactor-year. One should correctly interpret this figure of merit to mean: “With CDF = 0.01/reactor-year, in the long run the nuclear industry is going to experience at least one major accident for every 100 reactor-years of operation, with the actual accident rate likely being VERY much higher … and no one can really know how frequent or how costly to the public all of these accidents will be.”
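For readers who want the arithmetic, here is a minimal sketch of what a CDF figure implies if it is taken at face value, that is, as a constant rate in a Poisson model, which is exactly the assumption this article disputes. The function names and the fleet size of 100 reactors are hypothetical, chosen only to show how "per reactor-year" scales with the number of reactors operating.

```python
import math


def expected_events(cdf, reactor_years):
    """Expected core damage events for a constant rate `cdf` per reactor-year."""
    return cdf * reactor_years


def prob_at_least_one(cdf, reactor_years):
    """Chance of one or more events under a constant-rate Poisson assumption."""
    return 1.0 - math.exp(-cdf * reactor_years)


cdf = 0.01      # per reactor-year, the figure used in the text
fleet = 100     # hypothetical number of reactors operating at once

# One reactor running for 100 years is 100 reactor-years.
print(expected_events(cdf, 1 * 100))        # 1.0 expected event
print(prob_at_least_one(cdf, 1 * 100))      # about 0.632

# A hypothetical 100-reactor fleet accumulates 100 reactor-years every year.
print(expected_events(cdf, fleet * 1))      # 1.0 expected event per calendar year
```

If the constant-rate figure is only a lower bound, as argued here, these numbers are floors, not forecasts.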

That is, CDF doesn’t tell you anything about the adequacy of reactor protections ... rather, CDF gives you a best-case scenario on how bad reactor protections are … the exact opposite of NEIMA’s claim.

Thus, the civilian nuclear power industry has spent many billions on the development of PRA software, selling risk-informed, performance-based regulation to politicians, and marketing nuclear energy as a climate change solution … that in reality hands anti-nuclear activists an unimaginably potent legal weapon.

The price of arrogant ignorance is high. A likely consequence of NEIMA is that smart anti-nukes will use it to impede nuclear energy’s place in the portfolio of technologies needed to combat climate change. NEIMA would have been more appropriately named the Gimme Danger Act.